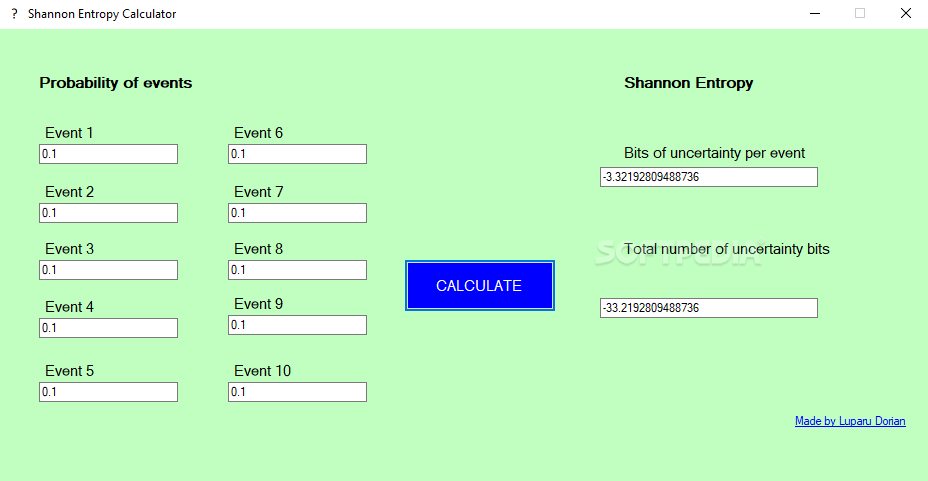

Distribution Entropy, Phase Entropy, & Multiscale Distribution Entropy Physiological Time Series Calculator

Why is entropy an important consideration in assessing physiological time series?

Physiological signals are both non-stationary and non-linear. Non-linear methods of measuring the complex nature of the signal are therefore vital to capture the full nature of the physiological state. Entropy gives the researcher information concerning the regularity of a signal [1]. While irregularity may be described with some accuracy, the meaning behind it has previously been difficult to ascertain. Researchers have tested the prior standard entropy values for their ability to separate irregularity into categories of randomness versus complex coordination underlying a measured signal [2]. Studies of arrhythmia subjects and healthy controls have shown questionable results, often failing to differentiate the chaotic nature of the arrhythmia ECG signals from those of young healthy subjects [3, 4]. As arrhythmia is an example of a poorly adapting system with highly random interbeat intervals, a scientifically reliable entropy metric must be able to differentiate this chaotic signal from that of healthy subjects, as well as from that of elderly subjects, who sit at the opposite end of the regularity spectrum and exhibit rigid periodicity.

When the irregularity of a signal changes, is it a change in complexity, or is the signal merely becoming more random? Until recently it has only been possible to show greater levels of irregularity; it was impossible to determine whether a change in irregularity was positive or negative for the living system. Using the three entropy metrics provided by this website, it is now possible to understand the level of healthy coordination in a subject. This is of utmost importance in pre-/post-intervention research, as it can now be shown whether an intervention offers an increased level of healthy coordination in subjects. It also offers a window into why some medically diagnosable states, such as nocturnal enuresis, eating disorders, or duodenal ulcers, tend to exhibit a higher HRV than control groups free of those issues. Our own pilot investigation has shown that chiropractic intervention reduces HRV in enuretics but increases complexity. This suggests that increased HRV in these unhealthy states may actually be irregularity arising from a more chaotic and random level of coordination associated with lost health.

In the world of data science, entropy is a crucial concept. It’s a measure of uncertainty, randomness, or chaos in a set of data. One of the most common types of entropy used in data science is Shannon entropy. In this blog post, we’ll guide you through the process of calculating the Shannon entropy of an array using Python’s NumPy library.

Shannon entropy, named after Claude Shannon, is a measure of the uncertainty or randomness in a set of data. It’s widely used in information theory to quantify information, uncertainty, or randomness. The higher the entropy, the more uncertain or random the data is.

NumPy is a powerful Python library for numerical computations. It provides a high-performance multidimensional array object and tools for working with these arrays. It’s an essential tool for any data scientist working with Python.

Getting Started with NumPy

Before we dive into the calculation of Shannon entropy, let’s ensure that you have NumPy installed.

The Shannon entropy formula, H = -Σ p(x) log2 p(x), calculates the sum of the product of the probability of each element and the base-2 logarithm of that probability, and then negates the result.

Conclusion

And there you have it! You’ve successfully calculated the Shannon entropy of an array using Python’s NumPy library. This is a powerful tool in your data science toolkit, allowing you to quantify the uncertainty or randomness in your data. Remember, the higher the Shannon entropy, the more uncertain or random the data is, so you can use this measure to understand the structure and predictability of your data. We hope this guide has been helpful.
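As an illustration of the Shannon entropy calculation this post describes, here is a minimal sketch in NumPy. It is not the post's original code: the function name `shannon_entropy` and the sample array are assumptions made for this example, and the probabilities are estimated from how often each distinct value occurs in the array.

```python
import numpy as np


def shannon_entropy(values):
    """Shannon entropy, in bits, of the empirical distribution of `values`.

    Hypothetical helper for illustration: each distinct value is treated as
    one outcome, and its probability is its relative frequency in the array.
    """
    values = np.asarray(values).ravel()
    if values.size == 0:
        raise ValueError("cannot compute the entropy of an empty array")

    # Count how often each distinct value occurs.
    _, counts = np.unique(values, return_counts=True)

    # Turn counts into probabilities. Every count is at least 1,
    # so every probability is > 0 and log2 is well defined.
    probabilities = counts / counts.sum()

    # H = -sum(p * log2(p))
    entropy = -np.sum(probabilities * np.log2(probabilities))

    # Adding 0.0 turns a possible -0.0 (constant input) into 0.0.
    return float(entropy) + 0.0


# Assumed sample data: four distinct values, each equally likely.
data = np.array([1, 1, 2, 2, 3, 3, 4, 4])
print(shannon_entropy(data))  # 2.0 bits
```

An array in which every element is identical gives an entropy of 0, while spreading the elements evenly over more distinct values raises it. For continuous-valued signals such as interbeat intervals, the values would normally need to be binned first; that step is left out of this sketch.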

Calculating Shannon Entropy of an Array Using Python’s NumPy
